Our PagerDuty search alert was one of our first user-contributed alerts. @darron dropped by our chat room and pointed out places where small improvements to the alert could give a lot more information when jumping on a problem.

Over the past week, I talked with a few customers about how they were using PagerDuty alerts. They suggested a few more small improvements and noted that the parameters could be more clearly explained.

First, we’ve added the list of systems that generated messages, so you’ll immediately know which systems are affected (right from the email or text message). The list appears at the end of the search alert’s description.

An important aspect of PagerDuty’s triggers is the ability to tie multiple alerts to the same incident, so they can be acted on as one problem. PagerDuty does this with the incident_key option, which, according to their documentation:

> …identifies the incident to which this trigger event should be applied. If there’s no open (i.e. unresolved) incident with this key, a new one will be created. If there’s already an open incident with a matching key, this event will be appended to that incident’s log. The event key provides an easy way to “de-dup” problem reports. If this field isn’t provided, PagerDuty will automatically open a new incident with a unique key.
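To make the rule quoted above concrete, here is a toy in-memory model of it in Python. This is only a sketch of the behavior as the documentation describes it, not PagerDuty's implementation; the `IncidentStore` class and its method names are made up for illustration.

```python
import uuid


class IncidentStore:
    """Toy model of the de-dup rule (hypothetical, not PagerDuty's code)."""

    def __init__(self):
        # Maps incident_key -> log of event descriptions for open incidents.
        self.open_incidents = {}

    def trigger(self, description, incident_key=None):
        # No key supplied: open a new incident under a generated unique key.
        if incident_key is None:
            incident_key = uuid.uuid4().hex
        # setdefault opens a new incident when none is open with this key;
        # otherwise it returns the existing log, and the event is appended.
        self.open_incidents.setdefault(incident_key, []).append(description)
        return incident_key

    def resolve(self, incident_key):
        # After resolving, the next trigger with this key opens a new incident.
        self.open_incidents.pop(incident_key, None)


store = IncidentStore()
store.trigger("disk full", incident_key="disk-alert")
store.trigger("disk still full", incident_key="disk-alert")  # same incident
store.trigger("unkeyed event")  # gets its own incident
```

After these three calls the store holds two open incidents: one keyed `disk-alert` with two log entries, and one with a generated key.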

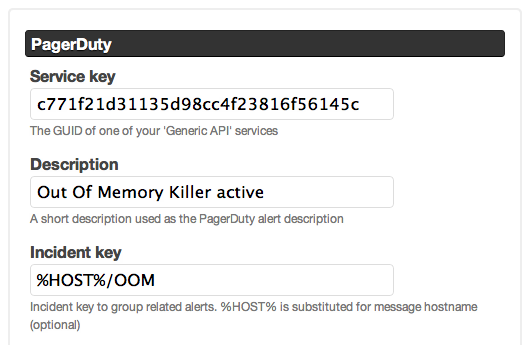

Based on that and on our customers’ feedback, there’s no single right answer about whether an incident key should be unique to a server or unique to a saved search. To support both, I introduced a token called %HOST% that can be used in the incident key.

If present, %HOST% will be replaced with the hostname of the system that triggered the alert. This means that if three hosts were matched by a saved search, you can receive three PagerDuty alerts (by including %HOST% in the incident key) or just one (by leaving it out).
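The substitution can be sketched in a few lines of Python. This is a minimal illustration of the idea, not the alert's actual source; the function name `incident_keys` and the example key formats are assumptions.

```python
HOST_TOKEN = "%HOST%"


def incident_keys(key_template, hostnames):
    """Return the distinct incident keys produced for the matched hosts.

    Hypothetical helper: each hostname is substituted for %HOST% in the
    template, and duplicate keys are collapsed (order is preserved).
    """
    keys = []
    for host in hostnames:
        key = key_template.replace(HOST_TOKEN, host)
        if key not in keys:
            keys.append(key)
    return keys


hosts = ["web1", "web2", "db1"]
per_host = incident_keys("oom-killer/%HOST%", hosts)  # one key per host
shared = incident_keys("oom-killer", hosts)           # a single shared key
```

With the token in the template, three matched hosts yield three keys (and so three incidents); without it, every host maps to the same key.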

For instance, imagine a search alert that triggers when the Linux OOM Killer is invoked. It’s likely that each occurrence is a distinct server-specific problem, and a single Papertrail search alert can create a separate host-specific PagerDuty incident for each affected server.

Contrast that with a search alert for a Web app error that could occur on dozens of Web servers. In that case, the error is probably not host-specific, and multiple occurrences are probably the same issue. The Papertrail search alert can update a single alert-specific PagerDuty incident.
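The two cases can be compared side by side with a small Python sketch. It groups matched log events by incident key and builds a description ending with the list of affected systems. The function name, key templates, hostnames, and description format here are all hypothetical and chosen only for illustration.

```python
def group_into_incidents(key_template, alert_name, events):
    """Group (hostname, message) events into incidents by incident key.

    Returns {incident_key: description}, where each description ends with
    the hosts that contributed events (format assumed for this example).
    """
    hosts_by_key = {}
    for host, _message in events:
        key = key_template.replace("%HOST%", host)
        hosts = hosts_by_key.setdefault(key, [])
        if host not in hosts:
            hosts.append(host)
    return {
        key: f"{alert_name} ({', '.join(hosts)})"
        for key, hosts in hosts_by_key.items()
    }


# Host-specific problem: %HOST% in the key gives one incident per server.
oom = group_into_incidents(
    "oom/%HOST%",
    "OOM Killer invoked",
    [("app1", "Out of memory: Kill process"), ("app2", "Out of memory: Kill process")],
)

# Shared problem: a fixed key folds every occurrence into one incident.
web = group_into_incidents(
    "web-app-error",
    "Web app error",
    [("web1", "500 error"), ("web2", "500 error"), ("web1", "500 error")],
)
```

Here `oom` contains two incidents, one per server, while `web` contains a single incident whose description lists both Web servers.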

To see how we implemented this alert, check out the source code on GitHub.

As always, drop us a line or say hi in our chat room if you have any more suggestions or just want to talk shop.