When you’re troubleshooting a problem or tracking down a bug in Python, the first place to look for clues about server issues is the application log files.

Python includes a robust logging module in the standard library, which provides a flexible framework for emitting log messages. This module is widely used by various Python libraries and is an important reference point for most programmers when it comes to logging.

The Python logging module provides a way for applications to configure different log handlers and provides a standard way to route log messages to these handlers. As the Python.org documentation notes, there are four basic classes defined by the Python logging module: Loggers, Handlers, Filters, and Formatters. We’ll provide more details on these below.
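To make the four classes concrete, here is a minimal sketch that wires one of each together. The logger name `demo.classes` and the filtering rule (dropping any message that mentions a password) are hypothetical choices for illustration, and output goes to an in-memory stream so it can be inspected.

```python
import io
import logging

# Logger: the object application code calls to emit messages.
logger = logging.getLogger('demo.classes')
logger.setLevel(logging.DEBUG)
logger.propagate = False  # keep this demo independent of the root logger

# Handler: decides where records go (here, an in-memory text stream).
stream = io.StringIO()
handler = logging.StreamHandler(stream)

# Formatter: decides how each record is rendered as text.
handler.setFormatter(logging.Formatter('%(levelname)s %(name)s: %(message)s'))

# Filter: decides whether a record is emitted at all.
# Hypothetical rule: drop any message that contains "password".
class DropSecrets(logging.Filter):
    def filter(self, record):
        return 'password' not in record.getMessage()

handler.addFilter(DropSecrets())
logger.addHandler(handler)

logger.info('user logged in')
logger.info('password=hunter2')  # rejected by the filter

print(stream.getvalue(), end='')  # INFO demo.classes: user logged in
```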

Getting Started with Python Logs

There are a few important steps to take when setting up your logs. First, make sure logging is enabled in the applications you use. Next, categorize your logs by name: named logs are easier to maintain, easier to search in large log files, and easier to filter for the information you need.

To send log messages in Python, request a logger object. Give it a unique name so your application can filter and prioritize how it handles different messages. The example also adds a StreamHandler, which prints log records to the console (standard error by default). Here’s a simple example:

```python
import logging

logging.basicConfig(handlers=[logging.StreamHandler()])
log = logging.getLogger('test')
log.error('Hello, world')
```

This outputs:

```text
ERROR:test:Hello, world
```

This message consists of three fields. The first, ERROR, is the log level. The second, test, is the logger name. The third field, “Hello, world”, is the free-form log message.
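Those three fields come from the default format string, which the logging module exposes as `logging.BASIC_FORMAT`; basicConfig uses it when no `format` is given. As a sketch, attaching it explicitly to a handler reproduces the same line (the in-memory stream is only there so the result can be checked):

```python
import io
import logging

# The format basicConfig falls back to when no format= is given.
print(logging.BASIC_FORMAT)  # %(levelname)s:%(name)s:%(message)s

stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter(logging.BASIC_FORMAT))

log = logging.getLogger('test')
log.addHandler(handler)
log.propagate = False  # avoid a second copy via the root logger
log.error('Hello, world')

print(stream.getvalue(), end='')  # ERROR:test:Hello, world
```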

Most problems in production stem from unexpected or unhandled errors. When Python hits an unhandled exception, it prints a traceback containing all the relevant information the interpreter could gather. That thoroughness can make a traceback a bit hard to read, though. Let’s look at an example traceback. We’ll call a function that isn’t defined and examine the error message.

```python
def test():
    nofunction()

test()
```

This outputs:

```text
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "<stdin>", line 2, in test
NameError: name 'nofunction' is not defined
```

This shows the common parts of a Python traceback. The error message is usually at the end of the traceback. It says “nofunction is not defined,” which is what we expected. The traceback also lists every stack frame that was active when the error occurred, with the most recent call last. Here we can see that it occurred in the test function on line two. Stdin means standard input and refers to the console where we typed this function. If we were running a Python source file, we’d see the file name here instead.

Configuring Logging

Configure the logging module to send messages where you want them. For most applications, you will want to add a Formatter and a Handler to the root logger. Formatters let you specify the fields and timestamps in your logs. Handlers let you define where they are sent. To set these up, the logging module provides a convenience function called basicConfig.

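```python
import logging

# level=DEBUG is needed here: the root logger defaults to WARNING,
# so logging.debug() would otherwise print nothing.
logging.basicConfig(level=logging.DEBUG,
                    format='%(asctime)s %(message)s',
                    handlers=[logging.StreamHandler()])

logging.debug('Hello World!')
```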
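basicConfig is a shortcut: it attaches a Formatter to each handler and then adds the handlers to the root logger. The sketch below performs the same steps by hand on a named logger (`demo.manual`, a hypothetical name) instead of the root, and adds a `datefmt` to control how `%(asctime)s` is rendered:

```python
import io
import logging

stream = io.StringIO()

# Handler: where records go.
handler = logging.StreamHandler(stream)
# Formatter: which fields appear; datefmt sets the %(asctime)s layout.
handler.setFormatter(logging.Formatter('%(asctime)s %(message)s',
                                       datefmt='%Y-%m-%d %H:%M:%S'))

logger = logging.getLogger('demo.manual')
logger.setLevel(logging.DEBUG)  # let DEBUG records through
logger.addHandler(handler)
logger.propagate = False

logger.debug('Hello World!')
line = stream.getvalue()
print(line, end='')
```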

By default, Python writes uncaught exceptions to your system’s standard error stream. Alternatively, you can replace sys.excepthook with a function that sends uncaught exceptions through the logging module. This gives you the flexibility to provide custom formatters and handlers. For example, here we log our exceptions to a log file using the FileHandler:

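```python
import logging
import sys

logger = logging.getLogger('test')
fileHandler = logging.FileHandler('errors.log')
logger.addHandler(fileHandler)

def my_handler(exc_type, exc_value, exc_tb):
    # Pass the exception details explicitly: logger.exception() reads
    # sys.exc_info(), which holds no exception inside sys.excepthook.
    logger.error('Uncaught exception: %s', exc_value,
                 exc_info=(exc_type, exc_value, exc_tb))

# Install exception handler
sys.excepthook = my_handler

# Throw an error
nofunction()
```

This writes the message, followed by the full traceback, to the log file (the traceback lines are abbreviated here):

```text
$ cat errors.log
Uncaught exception: name 'nofunction' is not defined
Traceback (most recent call last):
  ...
NameError: name 'nofunction' is not defined
```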
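One refinement you may want, shown here as a sketch rather than a required step: let `KeyboardInterrupt` fall through to the interpreter’s default hook, `sys.__excepthook__`, so pressing Ctrl+C is not logged as a crash. The logger name `demo.uncaught` is hypothetical, and the script calls the hook directly with a `ValueError` so the result can be inspected without the process exiting.

```python
import io
import logging
import sys

stream = io.StringIO()
logger = logging.getLogger('demo.uncaught')
logger.addHandler(logging.StreamHandler(stream))
logger.propagate = False

def log_uncaught(exc_type, exc_value, exc_tb):
    # Let Ctrl+C behave normally instead of logging it as a crash.
    if issubclass(exc_type, KeyboardInterrupt):
        sys.__excepthook__(exc_type, exc_value, exc_tb)
        return
    logger.critical('Uncaught exception',
                    exc_info=(exc_type, exc_value, exc_tb))

sys.excepthook = log_uncaught

# Simulate what the interpreter does with an uncaught error,
# without actually terminating this script.
try:
    raise ValueError('bad value')
except ValueError:
    log_uncaught(*sys.exc_info())

print(stream.getvalue())
```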

In addition, you can filter logs by configuring the log level. One way to set the log level is through an environment variable, which lets you use a different level in development and production without changing code. The logging module does not read such a variable by itself, so the example below passes the value of LOGLEVEL to basicConfig, which accepts level names such as 'ERROR' as strings:

```text
$ export LOGLEVEL='ERROR'
$ python
>>> import logging, os
>>> logging.basicConfig(level=os.environ.get('LOGLEVEL', 'WARNING').upper(),
...                     handlers=[logging.StreamHandler()])
>>> logging.warning('Hello World!')  # prints nothing at ERROR level
>>> logging.error('Hello World!')
ERROR:root:Hello World!
```
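Passing an unrecognized name such as 'VERBOSE' to setLevel or basicConfig raises ValueError. If the variable might contain a typo, a small helper can validate it first and fall back to a default. The function name `level_from_env` and its WARNING default are hypothetical choices in this sketch:

```python
import logging
import os

def level_from_env(var='LOGLEVEL', default=logging.WARNING):
    """Return the numeric level named by environment variable `var`.

    Falls back to `default` when the variable is unset or does not name
    a standard level (DEBUG, INFO, WARNING, ERROR, CRITICAL, ...).
    """
    name = os.environ.get(var, '').strip().upper()
    # Level names are module attributes holding ints (logging.ERROR == 40);
    # the isinstance check rejects other attributes like logging.BASIC_FORMAT.
    level = getattr(logging, name, None) if name else None
    return level if isinstance(level, int) else default

os.environ['LOGLEVEL'] = 'error'
print(level_from_env())  # 40
os.environ['LOGLEVEL'] = 'verbose'  # not a level name
print(level_from_env())  # 30 (falls back to WARNING)

# In an application: logging.basicConfig(level=level_from_env())
```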

Logging from Modules

Modules intended for use by other programs should only emit log messages. These modules should not configure how log messages are handled. A standard logging best practice is to let the Python application importing and using the modules handle the configuration.
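The Python Logging HOWTO recommends that a library attach only a NullHandler to its top-level logger and leave all other configuration to the application. Here is a minimal sketch of both sides in one file; the package name `mylib` and the function `fetch` are hypothetical:

```python
import io
import logging

# --- inside a hypothetical library package "mylib" ---
lib_log = logging.getLogger('mylib')
# The library only adds a NullHandler; it never decides where output goes.
lib_log.addHandler(logging.NullHandler())

def fetch():
    lib_log.warning('cache miss')

# --- inside the application that imports "mylib" ---
stream = io.StringIO()
app_handler = logging.StreamHandler(stream)
app_handler.setFormatter(logging.Formatter('%(name)s %(levelname)s %(message)s'))
logging.getLogger('mylib').addHandler(app_handler)

fetch()
print(stream.getvalue(), end='')  # mylib WARNING cache miss
```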

Another standard best practice is for each module to use a logger named after the module itself. This naming convention makes it easy for the application to route messages from different modules separately, and it keeps the logging code in each module simple.
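Because module names are dotted, module loggers form a hierarchy, and records propagate up to their ancestors’ handlers. In this sketch (the names `shop`, `shop.db`, and `shop.api` are hypothetical), the application attaches one handler at the package level and raises the threshold for a single module without touching that module’s code:

```python
import io
import logging

# Loggers named after modules form a tree: "shop.db" is a child of "shop".
db_log = logging.getLogger('shop.db')    # e.g. in shop/db.py
api_log = logging.getLogger('shop.api')  # e.g. in shop/api.py

# The application configures once, at the package level...
stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter('%(name)s:%(levelname)s:%(message)s'))
shop = logging.getLogger('shop')
shop.addHandler(handler)
shop.setLevel(logging.INFO)
shop.propagate = False

# ...and can tune one module independently.
db_log.setLevel(logging.WARNING)

db_log.info('query took 3 ms')  # dropped: shop.db is at WARNING
api_log.info('GET /cart 200')   # shown: inherits INFO from "shop"
db_log.warning('slow query')    # shown

print(stream.getvalue(), end='')
# shop.api:INFO:GET /cart 200
# shop.db:WARNING:slow query
```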

You need just two lines of code to set up logging with a module-named logger: import logging, then call logging.getLogger(__name__). The special variable __name__ holds the full dotted name of the current module, so the same line works unchanged in any module. Here’s an example:

```python
import logging

log = logging.getLogger(__name__)

def do_something():
    log.debug('Doing something!')
```

Analyzing Your Logs with Papertrail

Python applications running on production servers can generate millions of log entries. Command-line tools like tail and grep are often useful during development. However, they may not scale well when analyzing millions of log events spread across multiple servers.

Centralized logging can make it easier and faster for developers to manage a large volume of logs. By consolidating log files onto one integrated platform, you can eliminate the need to search for related data that is split across multiple apps, directories, and servers. Also, a log management tool can alert you to critical issues, helping you more quickly identify the root cause of unexpected errors, as well as bugs that may have been missed earlier in the development cycle.

For production-scale logging, a log management tool such as SolarWinds® Papertrail™ can help you better manage your data. Papertrail is a cloud-based platform designed to handle logs from any Python application, including Django servers.

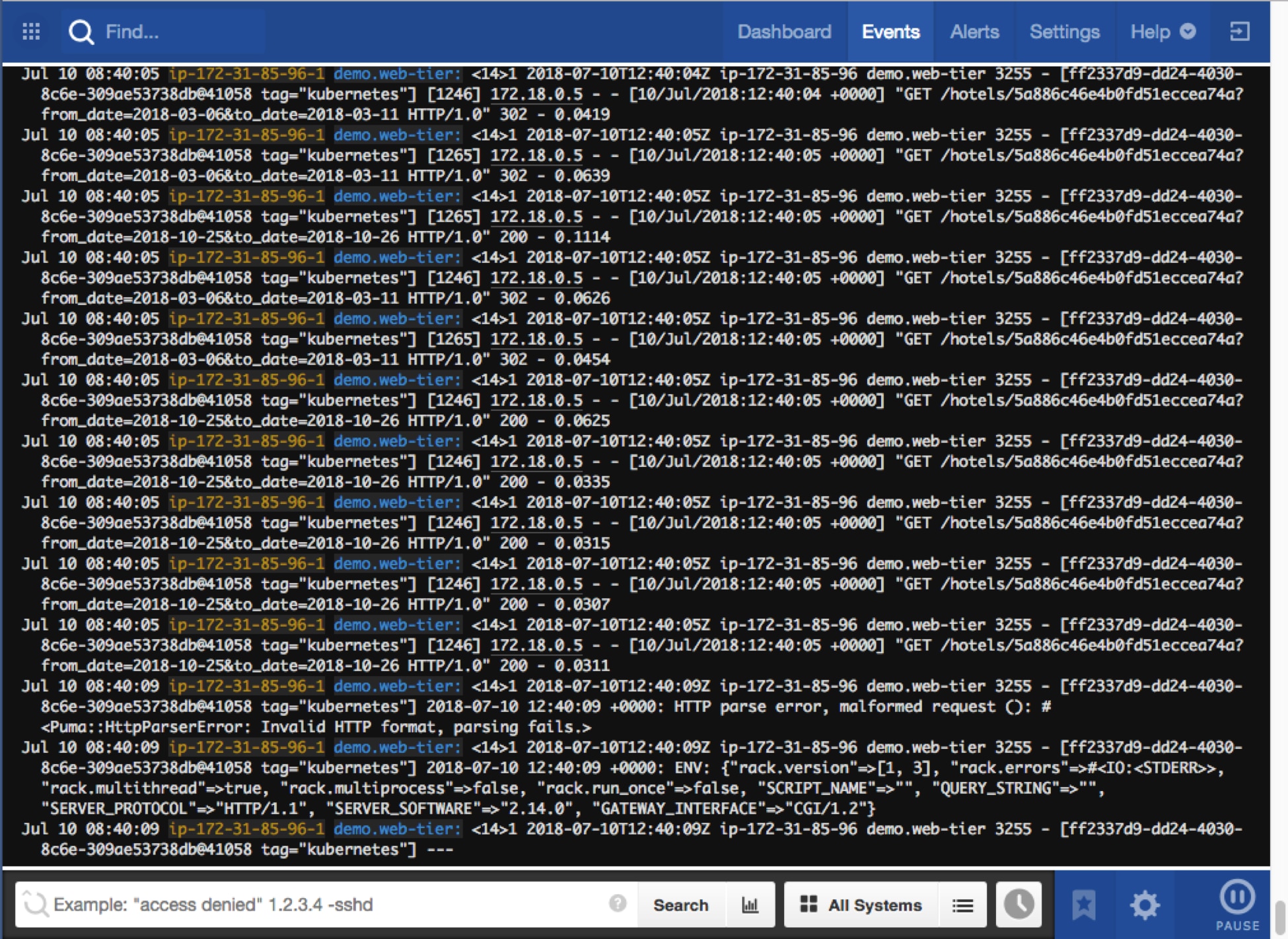

The Papertrail solution provides a central repository for event logs. It helps you consolidate all of your Python logs using syslog, along with other application and database logs, giving you easy access all in one location. It offers a convenient search interface to find relevant logs. It can also stream logs to your browser in real time, offering a “live tail” experience. Check out the tour of the Papertrail solution’s features.

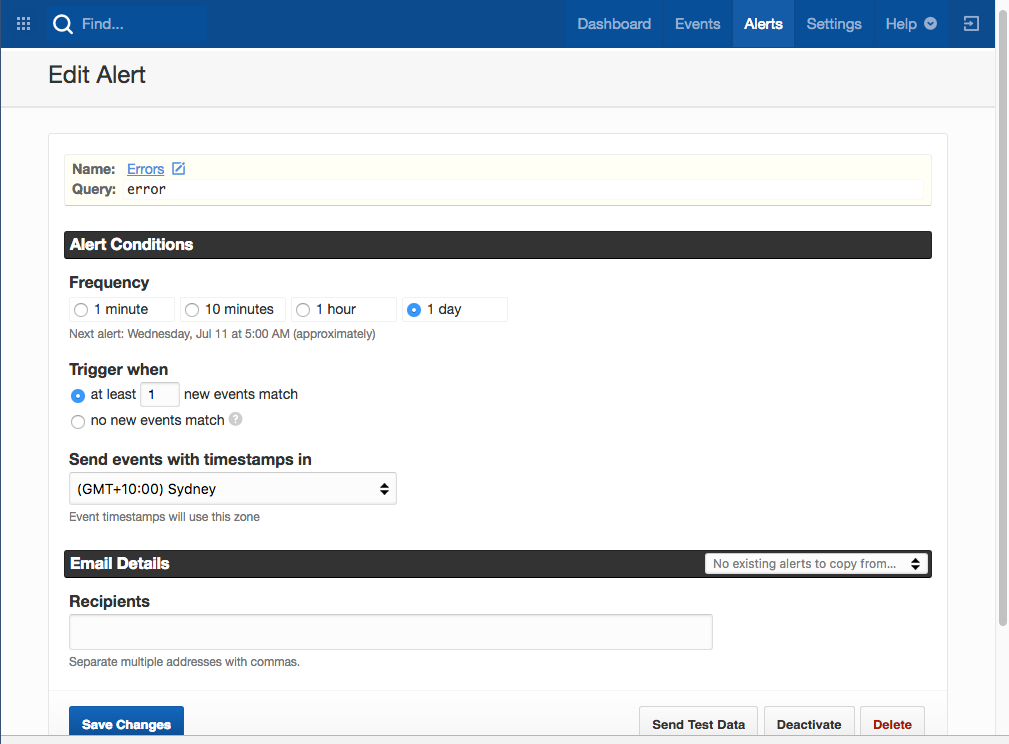

Papertrail is designed to help minimize downtime. You can receive alerts via email, or send them to Slack, Librato, PagerDuty, or any custom HTTP webhooks of your choice. Alerts are also accessible from a web page that enables customized filtering. For example, you can filter by name or tag.

Configuring Papertrail in Your Application

There are many ways to send logs to Papertrail depending on the needs of your application. You can send logs through journald, log files, Django, Heroku, and more. We will review the syslog handler below.

Python can send log messages directly to Papertrail with the Python SysLogHandler. Just set the endpoint to the log destination shown in your Papertrail settings. You can optionally format the timestamp or set the log level as shown below.

```python
import logging
import sys
from logging.handlers import SysLogHandler

# Replace logsN.papertrailapp.com and XXXXX with the host and port of
# the log destination shown in your Papertrail settings.
syslog = SysLogHandler(address=('logsN.papertrailapp.com', XXXXX))

log_format = '%(asctime)s YOUR_APP: %(message)s'
formatter = logging.Formatter(log_format, datefmt='%b %d %H:%M:%S')
syslog.setFormatter(formatter)

logger = logging.getLogger()
logger.addHandler(syslog)
logger.setLevel(logging.INFO)

def my_handler(exc_type, exc_value, exc_tb):
    # Pass the exception details explicitly; sys.exc_info() holds
    # no exception inside sys.excepthook.
    logger.error('Uncaught exception: %s', exc_value,
                 exc_info=(exc_type, exc_value, exc_tb))

# Install exception handler
sys.excepthook = my_handler

logger.info('This is a message')

nofunction()  # log an uncaught exception
```
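Before pointing the handler at Papertrail, you may want to see exactly what it sends. This sketch swaps the Papertrail endpoint for a local UDP socket (an assumption made purely for testing) and prints the raw datagram. The `<14>` prefix is the syslog priority for facility "user" (1 × 8) plus severity "info" (6), and SysLogHandler appends a NUL byte by default. The logger name `demo.syslog` is hypothetical.

```python
import logging
import socket
from logging.handlers import SysLogHandler

# Stand-in for the Papertrail endpoint: a local UDP socket on a free port.
receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
receiver.bind(('127.0.0.1', 0))
receiver.settimeout(2)
host, port = receiver.getsockname()

syslog = SysLogHandler(address=(host, port))  # UDP by default
syslog.setFormatter(logging.Formatter('YOUR_APP: %(message)s'))

logger = logging.getLogger('demo.syslog')
logger.setLevel(logging.INFO)
logger.addHandler(syslog)
logger.propagate = False

logger.info('This is a message')

datagram, _ = receiver.recvfrom(4096)
print(datagram)  # b'<14>YOUR_APP: This is a message\x00'

syslog.close()
receiver.close()
```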

Conclusion

Python offers a well-thought-out framework for logging that makes it simple to enable and manage your log files. Getting started is easy, and built-in helpers such as basicConfig and the standard handlers take care of most of the setup.

Papertrail adds even more functionality and tools for diagnostics and analysis, enabling you to manage your Python logs on a centralized cloud server. Quick to set up and easy to use, Papertrail consolidates your logs on a safe and accessible platform. It simplifies your ability to search log files, analyze them, and then act on them in real time, so that you can focus on debugging and optimizing your applications.

Learn more about how Papertrail can help optimize your development ecosystem.